
Practical breakdown of embeddings: how they work, how they’re trained, and concrete examples you can actually implement.

🧠 What embeddings actually are (intuitively + formally)

At the core:

- An embedding is a function $f: \mathcal{X} \rightarrow \mathbb{R}^d$ from some input space $\mathcal{X}$ to $d$-dimensional real vectors.
- It maps raw data (text, images, etc.) to a dense vector.

Key property:

-

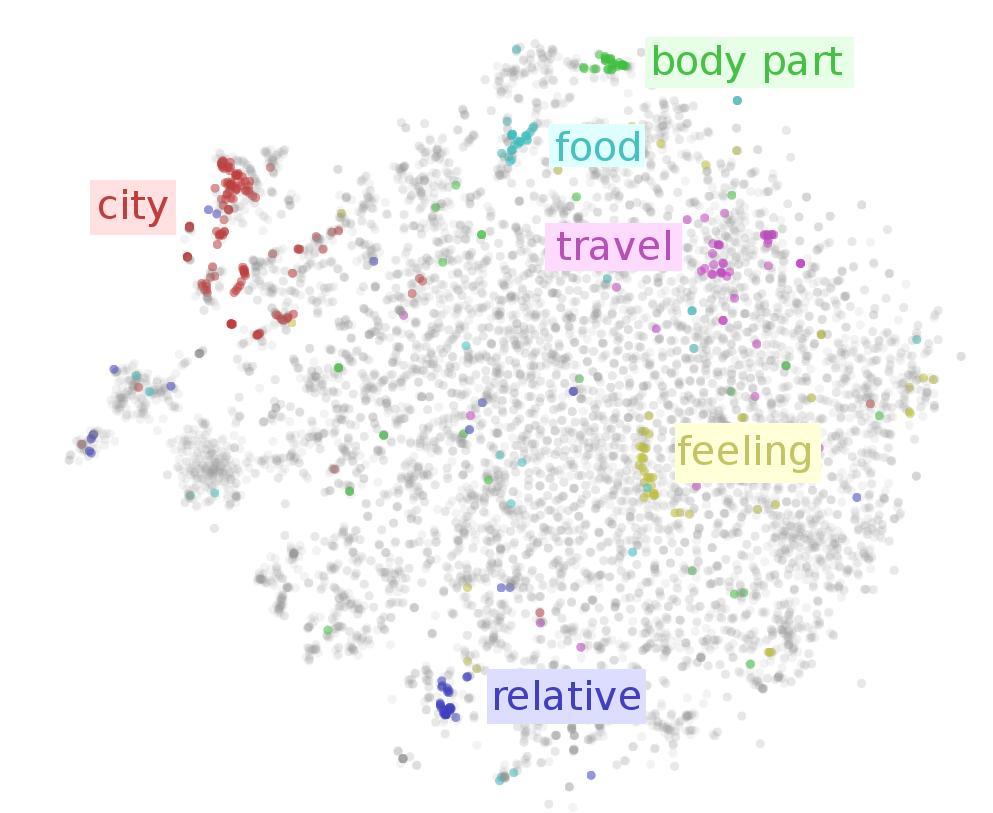

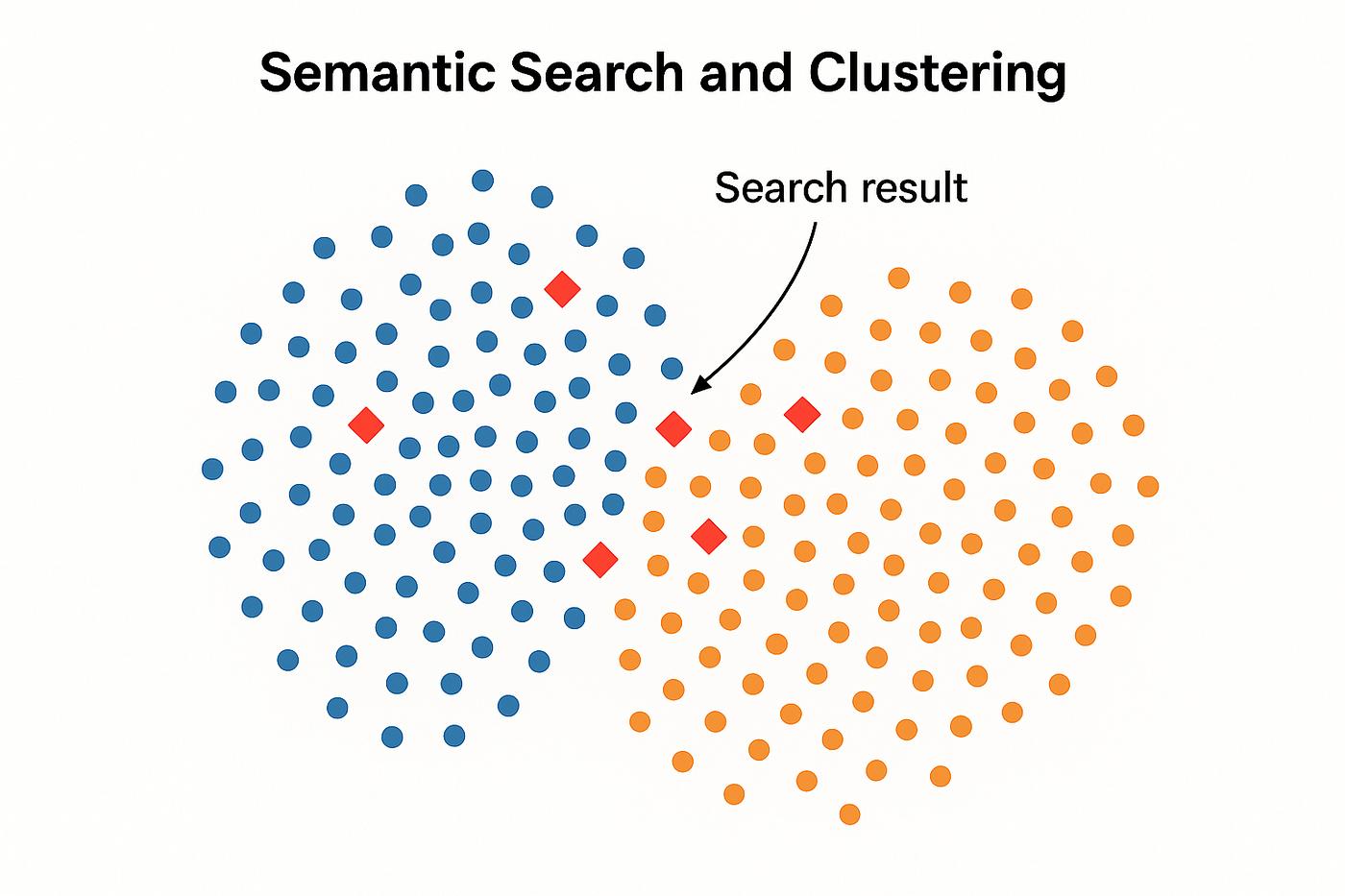

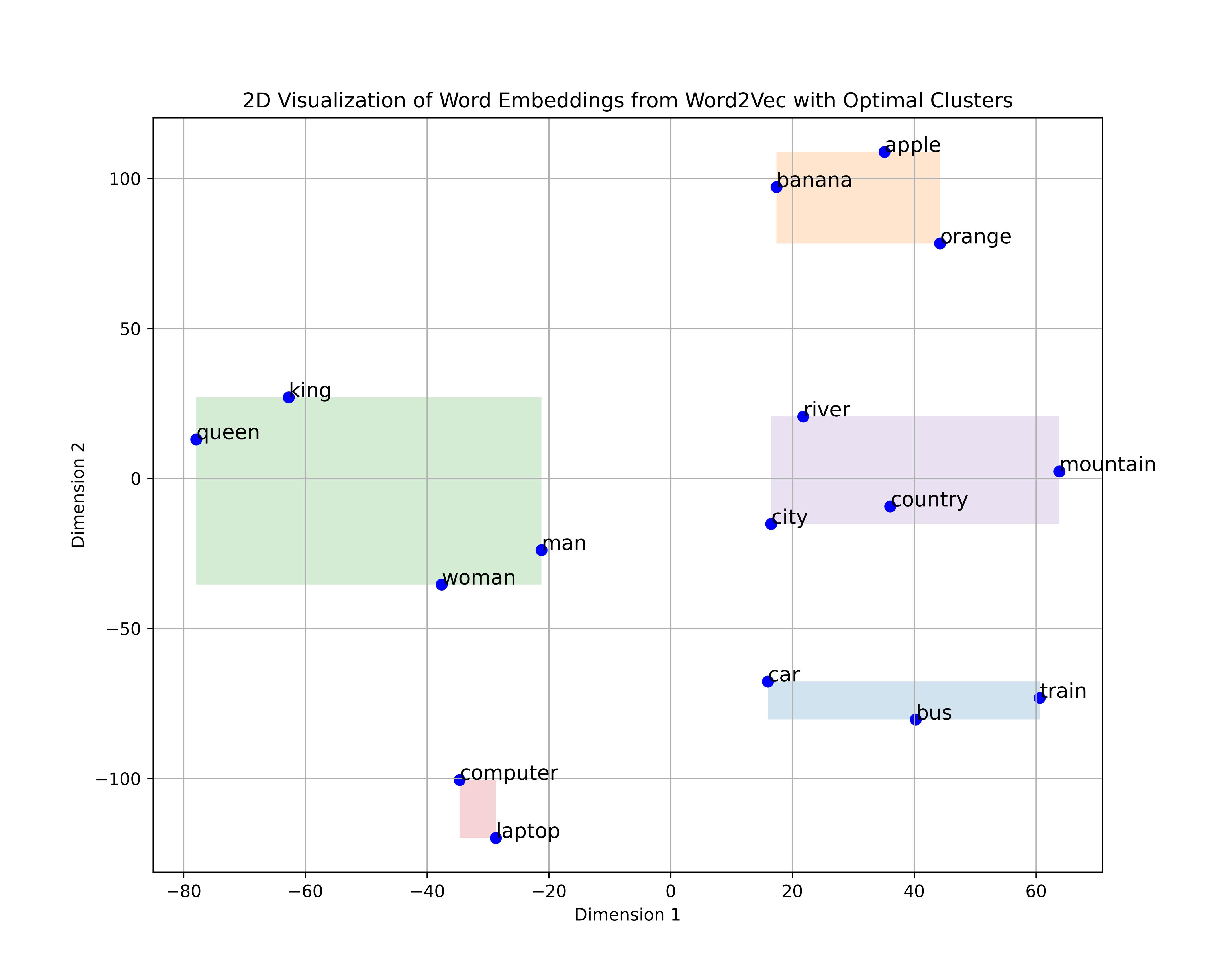

Semantic structure is encoded as geometry

- Similar things → vectors close together

- Different things → far apart

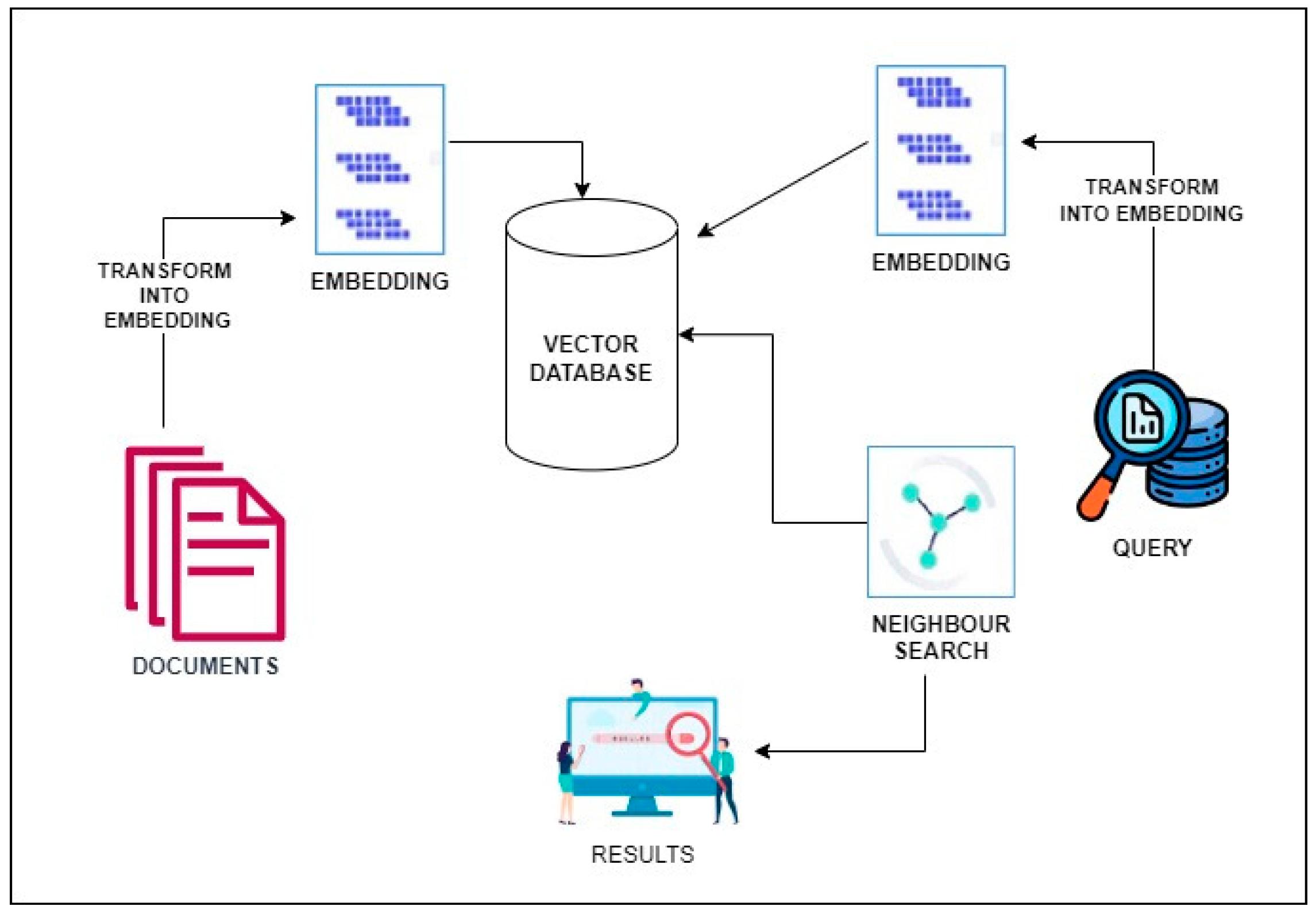

This is the core idea behind vector search, RAG, clustering, etc. (ibm.com)
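To make "close together" concrete, here is a minimal brute-force vector search sketch in NumPy. It assumes the embeddings are already computed and stored as rows of a matrix; the function name `top_k_cosine` and the toy 3-dimensional vectors are hypothetical, chosen only for illustration.

```python
import numpy as np

def top_k_cosine(query, matrix, k=2):
    """Return indices and scores of the k rows of `matrix` most cosine-similar to `query`."""
    # normalise so that a plain dot product equals cosine similarity
    q = query / np.linalg.norm(query)
    m = matrix / np.linalg.norm(matrix, axis=1, keepdims=True)
    scores = m @ q                    # one cosine score per stored vector
    order = np.argsort(-scores)[:k]   # highest scores first
    return order, scores[order]

# hypothetical toy "embeddings": rows 0 and 1 point in similar directions
docs = np.array([
    [0.9, 0.1, 0.0],   # "cat"
    [0.8, 0.2, 0.1],   # "kitten"
    [0.0, 0.1, 0.9],   # "stock market"
])
idx, scores = top_k_cosine(np.array([1.0, 0.0, 0.0]), docs, k=2)
```

Here `idx` is `[0, 1]`: the query lands next to "cat" and "kitten", not "stock market". Production systems replace this exhaustive scan with an approximate nearest-neighbour index, but the scoring idea is the same.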

⚙️ Why embeddings work (the underlying theory)

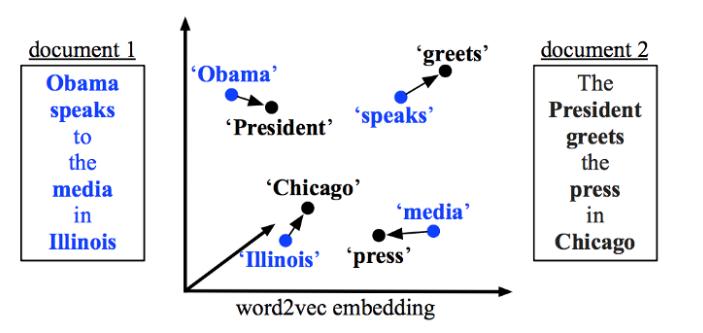

1. Distributional hypothesis

“You shall know a word by the company it keeps” (J. R. Firth, 1957)

- Words appearing in similar contexts → similar vectors

- This is the foundation of Word2Vec, GloVe, BERT, etc. (ibm.com)
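The hypothesis can be checked directly with raw context counts. This is a minimal sketch, assuming a tiny hypothetical corpus and a ±2-word window (`context_vector` is an illustrative helper, not a library function): each word is represented by counts of its neighbours, and words used in similar contexts end up with similar count vectors.

```python
import math
from collections import Counter

# hypothetical toy corpus
corpus = [
    "the cat drinks milk", "the dog drinks water",
    "the cat chases the mouse", "the dog chases the ball",
    "stocks rose on monday", "bonds fell on monday",
]

def context_vector(target, sentences, window=2):
    """Count the words that appear within `window` positions of `target`."""
    counts = Counter()
    for s in sentences:
        tokens = s.split()
        for i, tok in enumerate(tokens):
            if tok == target:
                lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
                counts.update(t for j, t in enumerate(tokens[lo:hi], start=lo) if j != i)
    return counts

def cosine(a, b):
    dot = sum(a[w] * b[w] for w in a)  # Counter returns 0 for missing keys
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b)

cat, dog, stocks = (context_vector(w, corpus) for w in ("cat", "dog", "stocks"))
```

`cosine(cat, dog)` is 11/12 ≈ 0.92 because both words appear next to "the", "drinks" and "chases", while `cosine(cat, stocks)` is 0. Word2Vec, GloVe and BERT learn dense vectors instead of raw counts, but they exploit the same signal.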

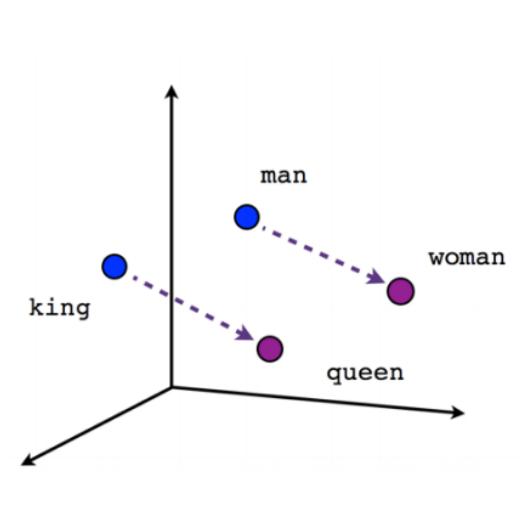

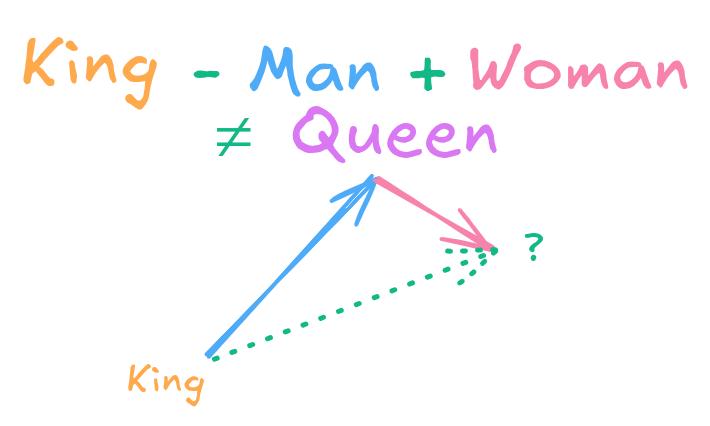

2. Geometry encodes meaning

Classic example:

king - man + woman ≈ queen

This works (approximately) because some relationships, such as male → female, show up as roughly constant offsets, i.e. linear directions, in the vector space.
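The arithmetic can be sketched with hand-made vectors. The 3-d values below are hypothetical (real embeddings have hundreds of dimensions, and the relationship only holds approximately); `analogy` is an illustrative helper that, like common analogy tooling, excludes the three query words from the answer.

```python
import numpy as np

# hypothetical 3-d embeddings; axes loosely mean (royalty, maleness, fruitiness)
vectors = {
    "king":  np.array([0.9,  0.8, 0.1]),
    "queen": np.array([0.9, -0.8, 0.1]),
    "man":   np.array([0.1,  0.8, 0.0]),
    "woman": np.array([0.1, -0.8, 0.0]),
    "apple": np.array([0.0,  0.0, 1.0]),
}

def cos(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def analogy(a, b, c, vocab):
    """Solve a - b + c ≈ ?, returning the most cosine-similar word not among the inputs."""
    target = vocab[a] - vocab[b] + vocab[c]
    candidates = {w: cos(target, v) for w, v in vocab.items() if w not in (a, b, c)}
    return max(candidates, key=candidates.get)

answer = analogy("king", "man", "woman", vectors)  # "queen"
```

Subtracting "man" and adding "woman" flips only the maleness coordinate, so the result lands on "queen".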

3. Dense vs sparse representations

| Representation | Characteristics |
|---|---|
| One-hot | huge (one dimension per word), no notion of similarity |
| TF-IDF | sparse, frequency only |
| Embeddings | dense, compact + semantic |

Embeddings are low-dimensional but information-rich representations. (GeeksforGeeks)
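The "no meaning" problem of one-hot vectors is easy to see numerically. In this sketch (the dense values are hypothetical, standing in for what a trained model might produce), every pair of distinct one-hot vectors is orthogonal, so "cat" is exactly as far from "dog" as from "car".

```python
import numpy as np

def cos(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

vocab = ["cat", "dog", "car"]
one_hot = np.eye(len(vocab))   # one dimension per vocabulary word

# distinct one-hot vectors are always orthogonal
onehot_cat_dog = cos(one_hot[0], one_hot[1])   # 0.0
onehot_cat_car = cos(one_hot[0], one_hot[2])   # 0.0

# hypothetical 2-d dense vectors
dense = np.array([[0.9, 0.1],    # cat
                  [0.8, 0.3],    # dog
                  [-0.2, 0.9]])  # car
dense_cat_dog = cos(dense[0], dense[1])   # about 0.97
dense_cat_car = cos(dense[0], dense[2])   # about -0.11
```

With a real vocabulary the one-hot width grows to tens of thousands while a dense embedding stays at a few hundred dimensions, and only the dense version can express that cats are more like dogs than cars.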

🏗️ How embeddings are trained (core methods)

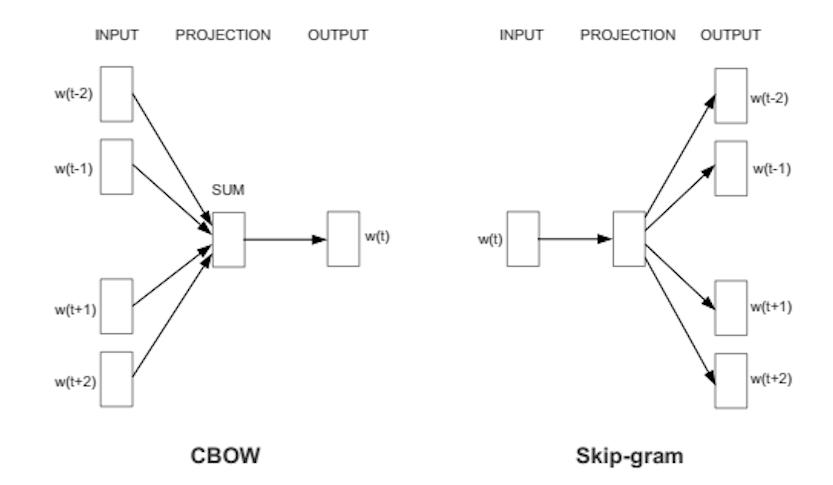

1. Prediction-based models (most important)

Word2Vec (classic foundation)

Two main training strategies:

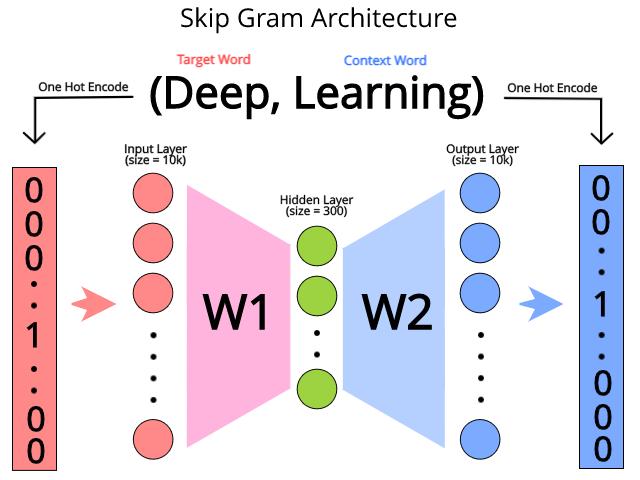

(a) Skip-gram

Predict context from a word:

[ P(context \mid word) ]

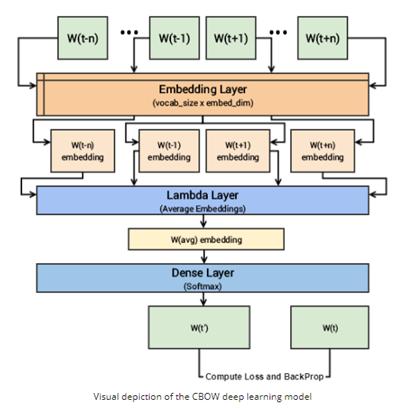

(b) CBOW

Predict word from context:

$$P(\text{word} \mid \text{context})$$
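The two strategies differ mainly in how training examples are cut from the text. A minimal sketch, assuming a symmetric window of 1 and hypothetical helper names `skipgram_pairs` and `cbow_examples`:

```python
def skipgram_pairs(tokens, window=1):
    """(center, context) pairs: Skip-gram predicts each context word from the center word."""
    pairs = []
    for i, center in enumerate(tokens):
        for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs

def cbow_examples(tokens, window=1):
    """(context words, center) examples: CBOW predicts the center word from its whole context."""
    examples = []
    for i, center in enumerate(tokens):
        context = [tokens[j]
                   for j in range(max(0, i - window), min(len(tokens), i + window + 1))
                   if j != i]
        examples.append((context, center))
    return examples

tokens = ["the", "cat", "sat", "down"]
```

For this sentence, `skipgram_pairs(tokens)[:3]` is `[("the", "cat"), ("cat", "the"), ("cat", "sat")]`, one prediction per neighbour, while `cbow_examples(tokens)[1]` is `(["the", "sat"], "cat")`, one prediction per position from the pooled context.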

Mechanism:

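- Input = one-hot vector for the word

- Hidden layer (linear, no activation) = the word's embedding

- Train via gradient descent

👉 The embedding table is literally the input→hidden weight matrix: multiplying a one-hot vector by it selects one row (Medium)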
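The "weights of the hidden layer" point can be verified in a few lines of NumPy; the sizes and random weights here are arbitrary placeholders.

```python
import numpy as np

vocab_size, dim = 5, 3
rng = np.random.default_rng(0)
W = rng.normal(size=(vocab_size, dim))   # input->hidden weights = the embedding table

word_id = 2
one_hot = np.zeros(vocab_size)
one_hot[word_id] = 1.0

hidden = one_hot @ W   # linear hidden layer: picks out row `word_id` of W
```

`hidden` equals `W[word_id]` exactly, which is why frameworks implement embedding layers as an index lookup rather than a full matrix multiplication.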

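Example (Skip-gram training loop)

```
# pseudo-code: one pass of Skip-gram training
for position, word in enumerate(corpus):
    # every word in the window around `word` is a separate prediction target
    for context_word in get_context_window(corpus, position):
        loss = -log P(context_word | word)   # cross-entropy on the predicted context word
        update_weights(loss)                  # one gradient-descent step
```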
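A runnable version of the pseudo-code above, as a NumPy sketch under loud assumptions: it uses a full softmax over the vocabulary and plain SGD, which is only practical for toy vocabularies (the original Word2Vec uses negative sampling or hierarchical softmax instead). `train_skipgram` and its hyperparameters are hypothetical.

```python
import numpy as np

def train_skipgram(sentences, dim=16, window=2, lr=0.05, epochs=100, seed=0):
    """Full-softmax Skip-gram trained with plain SGD (toy vocabularies only)."""
    vocab = sorted({w for s in sentences for w in s})
    idx = {w: i for i, w in enumerate(vocab)}
    rng = np.random.default_rng(seed)
    W_in = rng.normal(scale=0.1, size=(len(vocab), dim))    # embedding table
    W_out = rng.normal(scale=0.1, size=(dim, len(vocab)))   # output (context) weights

    # (center, context) index pairs from a symmetric window
    pairs = [(idx[s[i]], idx[s[j]])
             for s in sentences for i in range(len(s))
             for j in range(max(0, i - window), min(len(s), i + window + 1)) if j != i]

    losses = []
    for _ in range(epochs):
        total = 0.0
        for center, context in pairs:
            h = W_in[center].copy()              # embedding lookup
            scores = h @ W_out
            probs = np.exp(scores - scores.max())
            probs /= probs.sum()                 # softmax = P(. | center)
            total += -np.log(probs[context])     # -log P(context | word)
            grad_scores = probs
            grad_scores[context] -= 1.0          # gradient of softmax + cross-entropy
            grad_h = W_out @ grad_scores         # computed before W_out changes
            W_out -= lr * np.outer(h, grad_scores)
            W_in[center] -= lr * grad_h
        losses.append(total / len(pairs))
    return vocab, W_in, losses

vocab, embeddings, losses = train_skipgram([["cat", "sat", "mat"], ["dog", "sat", "floor"]])
```

The rows of `W_in` are the learned embeddings; `losses` records the mean negative log-likelihood per epoch, which should fall as training proceeds.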

2. Matrix factorization (GloVe)

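Instead of prediction:

- Build a global word–word co-occurrence matrix $X$ from the whole corpus

- Factorize it into lower dimensions: learn word and context vectors whose dot products (plus biases) approximate $\log X_{ij}$

Captures global statistics, not just local context windows. (Medium)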
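A minimal NumPy sketch of the GloVe objective $J = \sum_{i,j} f(X_{ij})\,(w_i^\top \tilde{w}_j + b_i + \tilde{b}_j - \log X_{ij})^2$, where $f(x) = (x / x_{max})^{\alpha}$ capped at 1. It assumes the paper's $x_{max} = 100$, $\alpha = 3/4$ and $1/d$ distance weighting, but swaps the paper's AdaGrad for plain full-batch gradient descent; `cooccurrence` and `train_glove` are hypothetical names.

```python
import numpy as np

def cooccurrence(sentences, window=2):
    """Symmetric co-occurrence counts; a pair d words apart adds 1/d."""
    vocab = sorted({w for s in sentences for w in s})
    idx = {w: i for i, w in enumerate(vocab)}
    X = np.zeros((len(vocab), len(vocab)))
    for s in sentences:
        for i, w in enumerate(s):
            for j in range(max(0, i - window), min(len(s), i + window + 1)):
                if j != i:
                    X[idx[w], idx[s[j]]] += 1.0 / abs(i - j)
    return vocab, X

def train_glove(X, dim=8, x_max=100.0, alpha=0.75, lr=0.05, epochs=500, seed=0):
    rng = np.random.default_rng(seed)
    V = X.shape[0]
    W = rng.normal(scale=0.1, size=(V, dim))    # word vectors w_i
    Wc = rng.normal(scale=0.1, size=(V, dim))   # context vectors w~_j
    b, bc = np.zeros(V), np.zeros(V)            # biases b_i, b~_j
    observed = X > 0                            # only nonzero counts enter the loss
    logX = np.log(np.where(observed, X, 1.0))
    f = np.where(observed, np.minimum((X / x_max) ** alpha, 1.0), 0.0)
    losses = []
    for _ in range(epochs):
        err = W @ Wc.T + b[:, None] + bc[None, :] - logX   # residual for every (i, j)
        losses.append(float(np.sum(f * err ** 2)))
        g = 2.0 * f * err                                  # dJ/d(residual), zero where X_ij = 0
        gW, gWc = g @ Wc, g.T @ W                          # both computed before any update
        W -= lr * gW
        Wc -= lr * gWc
        b -= lr * g.sum(axis=1)
        bc -= lr * g.sum(axis=0)
    return W + Wc, losses   # the paper uses W + W~ as the final word vectors

vocab, X = cooccurrence([["cat", "sat", "mat"], ["dog", "sat", "floor"]])
vectors, losses = train_glove(X)
```

The weighting $f$ keeps rare, noisy co-occurrences from dominating and caps the influence of very frequent pairs; on a real corpus $X$ would be large and sparse rather than a dense toy matrix.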

3. Neural embedding layers (modern approach)

Used in:

- Transformers (BERT, GPT)

- Recommender systems

Mechanism:

- Embedding = lookup table

- Trained jointly with model

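```python
import torch
embedding = torch.nn.Embedding(10000, 128)  # (vocab_size, dim): one trainable row per token
vector = embedding(torch.tensor([42]))      # lookup of token id 42, shape (1, 128)
```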
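"Trained jointly with the model" means the lookup table is just another parameter that receives gradients from the downstream loss. A PyTorch sketch, assuming a hypothetical bag-of-embeddings classifier on random data: it checks that the lookup equals one-hot times the weight matrix, then shows that one SGD step moves only the rows of tokens that appeared in the batch.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
vocab_size, dim, num_classes = 50, 16, 2

class BagOfEmbeddings(nn.Module):
    """Hypothetical toy model: average token embeddings, then a linear classifier."""
    def __init__(self):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, dim)   # lookup table, trained with the model
        self.head = nn.Linear(dim, num_classes)

    def forward(self, token_ids):                        # token_ids: (batch, seq_len)
        return self.head(self.embedding(token_ids).mean(dim=1))

model = BagOfEmbeddings()

# the lookup is equivalent to multiplying one-hot rows by the weight matrix
ids = torch.tensor([3, 7])
lookup_matches = torch.allclose(
    F.one_hot(ids, vocab_size).float() @ model.embedding.weight, model.embedding(ids))

# one joint SGD step on random (hypothetical) data
x = torch.randint(0, vocab_size, (8, 5))
y = torch.randint(0, num_classes, (8,))
before = model.embedding.weight.detach().clone()
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)
loss = F.cross_entropy(model(x), y)
loss.backward()
optimizer.step()

# only rows for token ids present in the batch received gradient and moved
changed_rows = (model.embedding.weight.detach() != before).any(dim=1).nonzero().flatten().tolist()
```

Because plain SGD (no momentum or weight decay) is used, `changed_rows` matches exactly the set of ids in `x`: embeddings for tokens the model never sees stay at their initial values.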

4. Contrastive learning (modern SOTA)

Used in:

- sentence embeddings

- CLIP (image-text)

- OpenAI embeddings

Core idea:

- similar (positive) pairs → pulled closer
- different (negative) pairs → pushed farther apart

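Loss function (the InfoNCE form, where $(x_i, x_j)$ is a positive pair and $k$ runs over the positive and all negatives for $x_i$):

$$\mathcal{L} = -\log \frac{\exp(\mathrm{sim}(x_i, x_j))}{\sum_k \exp(\mathrm{sim}(x_i, x_k))}$$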
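A NumPy sketch of this loss in its common batched form, assuming cosine similarity for $\mathrm{sim}$, a temperature $\tau$ that divides it (with $\tau = 1$ the formula above is reproduced exactly), and in-batch negatives: row $i$ of `positives` is the positive for anchor $i$, and every other row serves as a negative. `info_nce` is a hypothetical name.

```python
import numpy as np

def info_nce(anchors, positives, temperature=1.0):
    """Mean InfoNCE loss; positives[i] pairs with anchors[i], other rows act as negatives."""
    a = anchors / np.linalg.norm(anchors, axis=1, keepdims=True)
    p = positives / np.linalg.norm(positives, axis=1, keepdims=True)
    sim = a @ p.T / temperature              # sim[i, k] = cos(x_i, x_k) / tau
    sim -= sim.max(axis=1, keepdims=True)    # numerical stability; softmax is unchanged
    log_softmax = sim - np.log(np.exp(sim).sum(axis=1, keepdims=True))
    return float(-np.mean(np.diag(log_softmax)))   # -log p(correct positive)

rng = np.random.default_rng(0)
anchors = rng.normal(size=(8, 32))
positives = anchors + 0.1 * rng.normal(size=(8, 32))             # noisy "views" of the same items
aligned = info_nce(anchors, positives)
mismatched = info_nce(anchors, np.roll(positives, 1, axis=0))    # positives paired with wrong anchors
```

`aligned` is lower than `mismatched`, which is exactly the pressure the loss applies during training. When all similarities are equal, the loss is $\log N$ for a batch of $N$, i.e. chance level.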

🔬 How modern embeddings (LLMs) differ

Older:

- static embeddings (Word2Vec)

Modern:

- contextual embeddings

Example:

- “bank” (river vs finance) → different vectors

This is a large part of why models like BERT/GPT outperform Word2Vec on tasks where meaning depends on context.
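A toy illustration of the difference, not BERT itself: it assumes a random static table and a single untrained self-attention layer with hypothetical weights `Wq`, `Wk`, `Wv` and no positional encoding. A static lookup returns the same vector for "bank" in both sentences; after attention mixes in the surrounding words, the two "bank" vectors differ.

```python
import numpy as np

rng = np.random.default_rng(0)
vocab = ["the", "river", "bank", "loan", "flooded", "approved"]
idx = {w: i for i, w in enumerate(vocab)}
dim = 8
E = rng.normal(size=(len(vocab), dim))   # static embedding table (Word2Vec-style)
Wq, Wk, Wv = (rng.normal(size=(dim, dim)) / np.sqrt(dim) for _ in range(3))

def static(tokens):
    return E[[idx[t] for t in tokens]]

def self_attention(X):
    """One randomly initialised attention layer: each output row mixes the whole sentence."""
    Q, K, V = X @ Wq, X @ Wk, X @ Wv
    scores = Q @ K.T / np.sqrt(X.shape[1])
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights /= weights.sum(axis=1, keepdims=True)   # row-wise softmax
    return weights @ V

s1 = ["the", "river", "bank", "flooded"]
s2 = ["the", "bank", "loan", "approved"]
static_1, static_2 = static(s1)[2], static(s2)[1]                       # identical
ctx_1, ctx_2 = self_attention(static(s1))[2], self_attention(static(s2))[1]  # context-dependent
```

Real contextual models stack many trained attention layers, so the difference becomes meaningful (river sense vs finance sense) rather than merely present.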

🧪 Practical training examples

Example 1 — Train Word2Vec (Gensim)

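```python
from gensim.models import Word2Vec

# each sentence is a list of tokens
sentences = [["cat", "sat", "mat"], ["dog", "sat", "floor"]]

# sg=0 (the default) trains CBOW; pass sg=1 for Skip-gram
model = Word2Vec(sentences, vector_size=100, window=5, min_count=1)

vector = model.wv["cat"]  # numpy array of shape (100,)
```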

Example 2 — Train embeddings in PyTorch

```python
import torch
import torch.nn as nn

embedding = nn.Embedding(10000, 128)  # vocab, dim

input_ids = torch.tensor([1, 5, 23])   # three token ids

vectors = embedding(input_ids)         # shape (3, 128): one row per token id
```

Example 3 — Train contrastive embeddings

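```python
import torch
import torch.nn.functional as F

model = torch.nn.EmbeddingBag(10000, 128)  # toy stand-in encoder: mean of token embeddings
text1 = torch.tensor([[1, 5, 23]])    # anchor (token ids)
text2 = torch.tensor([[1, 5, 42]])    # positive: a paraphrase of text1
text3 = torch.tensor([[7, 99, 310]])  # negative: unrelated text
anchor, positive, negative = model(text1), model(text2), model(text3)
loss = F.triplet_margin_loss(anchor, positive, negative, margin=1.0)  # negative must be >= margin farther than positive
loss.backward()
```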

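Example 4 — PCA reduction

```python
import numpy as np
from sklearn.decomposition import PCA

X = np.random.default_rng(0).normal(size=(1000, 1536))  # placeholder: your (n_samples, dim) embedding matrix
pca = PCA(n_components=256)        # n_components must be <= min(n_samples, dim)
X_reduced = pca.fit_transform(X)   # shape (1000, 256)
print(pca.explained_variance_ratio_.sum())  # fraction of variance kept
```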

📊 Types of embeddings

| Type | Example |
|---|---|
| Word | Word2Vec, GloVe |
| Sentence | SBERT |
| Document | Doc2Vec |
| Image | CLIP |
| Graph | Node2Vec |
| Multimodal | CLIP, Gemini |

🧩 Key properties you should care about (engineering perspective)

1. Dimensionality

- Typical: 128–1536

- Tradeoff: memory and compute per comparison vs accuracy (more dimensions can capture finer distinctions)
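The memory side of the tradeoff is simple arithmetic: raw storage is vectors × dimensions × bytes per value. A sketch assuming float32 (4 bytes) and ignoring any index overhead; `index_size_bytes` is a hypothetical helper.

```python
def index_size_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw storage for a dense embedding matrix (float32 = 4 bytes), excluding index overhead."""
    return num_vectors * dim * bytes_per_value

for dim in (128, 768, 1536):
    gb = index_size_bytes(1_000_000, dim) / 1e9
    print(f"1M vectors x {dim:>4} dims (float32): {gb:.2f} GB")
```

This prints 0.51 GB, 3.07 GB and 6.14 GB: one million 1536-d vectors need about 12× the memory of 128-d ones, before counting any ANN index structures.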

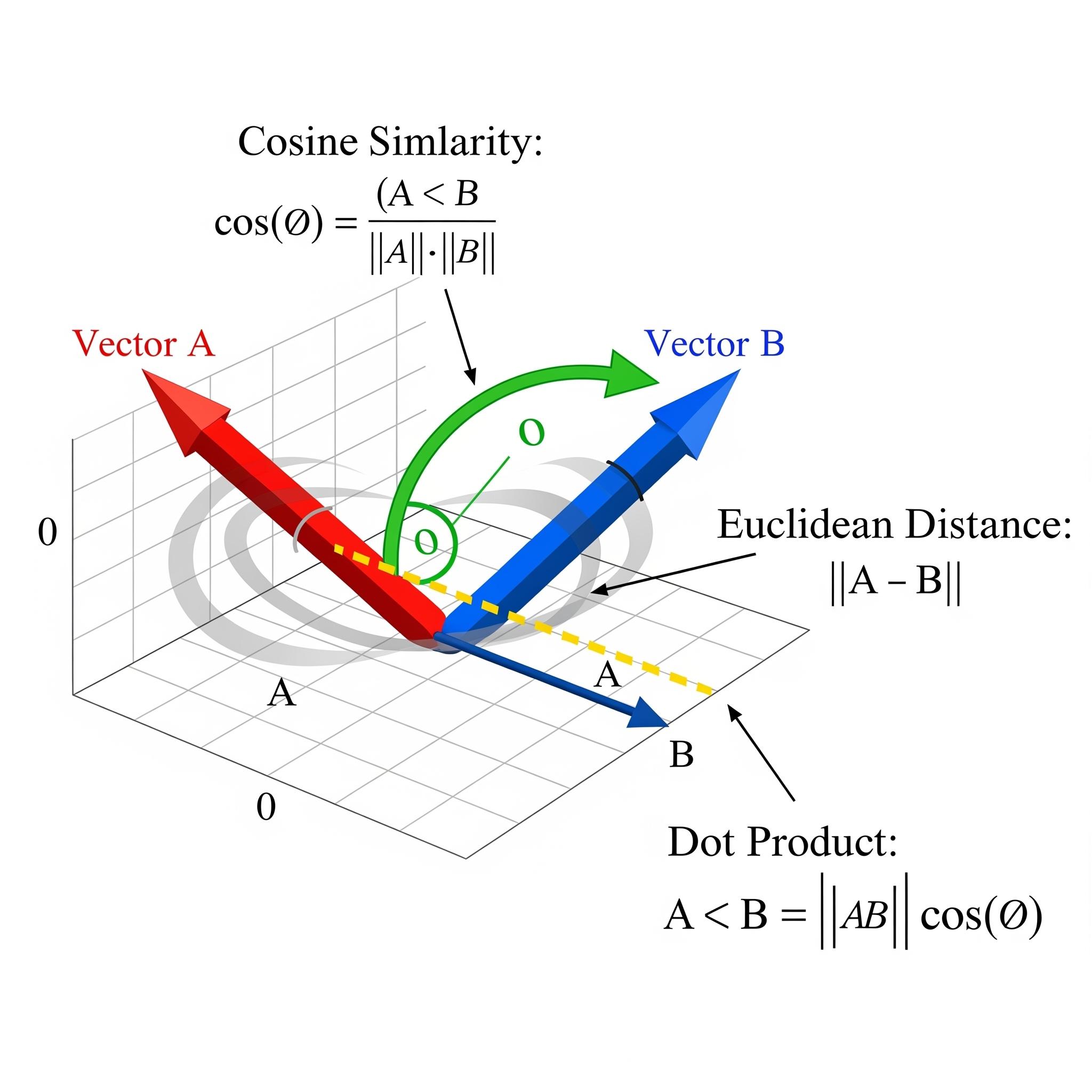

2. Distance metric

- cosine similarity (most common)

- dot product

- Euclidean
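The metrics are not interchangeable when vector norms differ. In this hypothetical 2-d example, candidate "b" points 45° away from the query but has a large norm, so dot product prefers it while cosine and Euclidean distance prefer the well-aligned "a".

```python
import numpy as np

def cosine(u, v):
    return float(u @ v / (np.linalg.norm(u) * np.linalg.norm(v)))

def dot(u, v):
    return float(u @ v)

def euclidean(u, v):
    return float(np.linalg.norm(u - v))

query = np.array([1.0, 0.0])
candidates = {
    "a": np.array([1.0, 0.1]),   # almost the same direction, similar length
    "b": np.array([5.0, 5.0]),   # 45 degrees away, much larger norm
}

best_cosine = max(candidates, key=lambda k: cosine(query, candidates[k]))         # "a"
best_dot = max(candidates, key=lambda k: dot(query, candidates[k]))               # "b"
best_euclidean = min(candidates, key=lambda k: euclidean(query, candidates[k]))   # "a"
```

Dot product rewards magnitude as well as direction, which is desirable only if the model was trained so that norm carries meaning.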

3. Normalization

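L2-normalizing vectors (scaling each to unit length) makes dot product equal to cosine similarity and keeps vector magnitude from dominating results. This is critical for: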

- search quality

- clustering
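A sketch of L2 normalization with a guard for all-zero rows (`l2_normalize` and the `eps` value are illustrative choices). After normalization, dot product equals cosine similarity, and squared Euclidean distance becomes $\lVert a - b \rVert^2 = 2 - 2\cos(a, b)$, so all three metrics produce the same ranking.

```python
import numpy as np

def l2_normalize(X, eps=1e-12):
    """Scale each row to unit length; eps avoids division by zero for all-zero rows."""
    norms = np.linalg.norm(X, axis=1, keepdims=True)
    return X / np.maximum(norms, eps)

rng = np.random.default_rng(0)
X = rng.normal(size=(5, 8)) * rng.uniform(0.1, 10, size=(5, 1))   # rows with very different norms
Xn = l2_normalize(X)

norms = np.linalg.norm(X, axis=1)
cos = (X @ X.T) / np.outer(norms, norms)     # cosine similarity of the raw vectors
dot_after = Xn @ Xn.T                        # dot product after normalization
sq_dist_after = ((Xn[:, None, :] - Xn[None, :, :]) ** 2).sum(axis=-1)
```

Normalizing once at indexing time (and once per query) lets a vector store use the cheaper dot product while returning cosine rankings.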

4. Training data distribution

Embeddings are only as good as their training data:

- corpus size

- domain relevance

⚠️ Common pitfalls (important)

❌ Mixing embedding spaces

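- embeddings from different models (or different versions of the same model) live in different coordinate systems and are not comparable, even when their dimensions match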
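Even in the most favourable hypothetical case, where "model B" is an exact random rotation of "model A", similarities within each model agree perfectly while cross-model comparisons are meaningless. This sketch uses random data as a stand-in for real embeddings; real models differ by far more than a rotation.

```python
import numpy as np

rng = np.random.default_rng(0)
dim = 64
model_a = rng.normal(size=(10, dim))   # 10 items embedded by hypothetical model A

# hypothetical model B: identical geometry, but a rotated coordinate system
Q, _ = np.linalg.qr(rng.normal(size=(dim, dim)))   # Q is orthogonal
model_b = model_a @ Q

def cos_matrix(U, V):
    U = U / np.linalg.norm(U, axis=1, keepdims=True)
    V = V / np.linalg.norm(V, axis=1, keepdims=True)
    return U @ V.T

within_a = cos_matrix(model_a, model_a)
within_b = cos_matrix(model_b, model_b)
cross = cos_matrix(model_a, model_b)   # item i in A vs the same item i in B
```

`within_a` and `within_b` are identical, yet `np.diag(cross)`, each item compared with itself across models, hovers near 0 instead of 1.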

❌ Assuming linear compression is harmless

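- PCA is a linear projection that discards low-variance directions; it can distort neighborhoods and semantic relationships, so measure what it preserves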
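One way to measure the damage is nearest-neighbour overlap before and after reduction. This sketch implements PCA with NumPy's SVD (a stand-in for scikit-learn's `PCA.fit_transform` in Example 4) and uses Euclidean neighbours, because PCA with all components is just centring plus a rotation and preserves Euclidean distances exactly, giving a built-in sanity check. The random isotropic data is a hypothetical, deliberately hard case; `pca_reduce`, `knn` and `neighbour_overlap` are illustrative names.

```python
import numpy as np

def pca_reduce(X, n_components):
    """PCA via SVD of the centred data."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:n_components].T

def knn(X, k):
    """Indices of each row's k nearest neighbours by Euclidean distance (excluding itself)."""
    d = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=-1)
    np.fill_diagonal(d, np.inf)
    return np.argsort(d, axis=1)[:, :k]

def neighbour_overlap(X, X_reduced, k=10):
    """Average fraction of each point's k nearest neighbours that survive the reduction."""
    before, after = knn(X, k), knn(X_reduced, k)
    return float(np.mean([len(set(b) & set(a)) / k for b, a in zip(before, after)]))

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 64))                       # placeholder for real embeddings
overlap_full = neighbour_overlap(X, pca_reduce(X, 64))
overlap_small = neighbour_overlap(X, pca_reduce(X, 8))
```

`overlap_full` is 1.0, while keeping 8 of 64 components preserves only a fraction of each point's neighbours. Real embeddings usually concentrate more variance in fewer directions, so run the same check on your own data before committing to a target dimension.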

❌ Ignoring normalization

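- cosine similarity itself is scale-invariant, but dot-product and Euclidean search only match cosine rankings when vectors are unit-length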

❌ Using embeddings without evaluation

Always test:

- retrieval accuracy

- clustering quality
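For retrieval accuracy, recall@k is a common starting point: for each query, the fraction of its relevant items that appear in the top k results, averaged over queries. A sketch assuming a hypothetical hand-labelled evaluation set (the document ids are made up):

```python
def recall_at_k(retrieved, relevant, k):
    """Mean over queries of |relevant ∩ top-k retrieved| / |relevant|."""
    scores = []
    for ranked, rel in zip(retrieved, relevant):
        rel = set(rel)
        scores.append(len(rel & set(ranked[:k])) / len(rel))
    return sum(scores) / len(scores)

# hypothetical evaluation set: ranked ids from your vector search, plus labelled relevant ids
retrieved = [["d3", "d1", "d7"], ["d2", "d9", "d4"], ["d5", "d6", "d8"]]
relevant = [["d1"], ["d4", "d2"], ["d0"]]

r1 = recall_at_k(retrieved, relevant, k=1)   # (0 + 1/2 + 0) / 3 = 1/6
r3 = recall_at_k(retrieved, relevant, k=3)   # (1 + 1 + 0) / 3 = 2/3
```

Tracking the same metric before and after a change (new model, PCA, different normalization) turns the pitfalls above into measurable regressions.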

🧠 Mental model (most useful takeaway)

Think of embeddings as:

A learned coordinate system where meaning = position

Training = learning that coordinate system so that:

- similar things cluster

- relationships become directions